Robots Making Robots, the Old-Fashioned Way: Why Hamilton's and Knuth's Methods Are Essential to AI-Assisted Development

I keep my hands dirty. Director title be damned — if I'm going to lead development teams building AI-powered systems, I need to know what the tools actually do, and most of all how well they do it (or don’t). So I built something. And because I spend enough time in the weighty world of Complex Rehab Technology, I decided to build a game. Along the way I affirmed that the fundamentals of engineering are still key in this new era of software development, and developed a paradigm I call Literate Agentic Programming.

My project is called Z-Forge and it's an interactive fiction generator. (Yes, the Z is for Zork despite the current build using the Ink engine.) But before I tell you what it does and how I built it, I should tell you about its predecessor, because the industry and I have both come a long way in eleven months.

Last year I built TADA (Text Adventure Diamond Age) using a simpler spec-driven approach and what I'll generously call the LLM-as-dungeon-master architecture. Context limits and the absence of guardrails caught up with me fast. On the bright side, I got to share an early scenario, based on a sci-fi novel I've been working on, with a veteran of the interactive fiction world. He was kind enough to play through it despite his misgivings about AI, document exactly how he made it go off the rails, and send me the pictures. Meet your heroes. Sometimes they're genuinely nice.

TADA taught me what I needed: pre-compiled world knowledge, retrieval-augmented generation, and real agentic tooling. Z-Forge is what happened when I applied those lessons.

What It Is

This part gets a little technical. If you care about how these systems work under the hood, read on. If not, you can skip ahead to “How it’s Built”—but you’ll miss some of why the approach works.

Z-Forge is written in Python using the Flet UI library. (But not by me! More on that later.) Ultimately, it’s able to run interactive fiction experiences in the open source Ink engine with an interface resembling a text message stream. But to get there, you have to build a World and then the Experience.

At a high level, Z-Forge separates knowledge, reasoning, and execution into distinct systems. A World is a hybrid RAG store that uses LanceDB for vectors, KuzuDB for a graph, and JSON for basic text. To create one, you feed it a lot of free-form data.

For my prototypes, I used the fan wiki for the Wings of Fire books my daughter loves. The text is broken into chunks for the vector store, including small chunks of one or two sentences along with their larger context. Identified entities such as characters and locations are summarized by an LLM using these chunks as input, and the summaries also go into the vector store. At the same time, an LLM parser reads the text to build a graph in which each node shares its ID with one of the entity summary vectors for cross-reference. This builds an ontology specific to fiction, using “noun verb noun” triples like “{character} is_a {species}”. Key elements of the “fictional world” ontology include character, species, location, time period, item, culture, and event. The hybrid store is capped off with a JSON document of basic key-value pairs, including a title and a short description of the world.
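To make the data shapes concrete, here is a minimal sketch of the small-chunk-plus-context idea and a “noun verb noun” relationship that shares an ID with an entity summary. It is not Z-Forge's actual code: the class names, the naive sentence splitter, the ID format, and the sample passage are all assumptions made for illustration, and it deliberately stops short of any LanceDB or KuzuDB calls.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str     # the small 1-2 sentence unit that would be embedded
    context: str  # the larger passage the small unit was cut from


@dataclass(frozen=True)
class Triple:
    subject_id: str  # assumed to match the ID of the entity's summary vector
    verb: str
    object_id: str


def split_sentences(text: str) -> list[str]:
    # Naive splitter (an assumption): break after ., ! or ? plus whitespace.
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def chunk_passage(passage: str, sentences_per_chunk: int = 2) -> list[Chunk]:
    # Group sentences into small chunks, each keeping its parent passage.
    sentences = split_sentences(passage)
    return [
        Chunk(text=" ".join(sentences[i:i + sentences_per_chunk]), context=passage)
        for i in range(0, len(sentences), sentences_per_chunk)
    ]


# Made-up sample text, not taken from any real wiki.
passage = "Ember is a dragon. She lives in the Ash Peaks. She guards an old library."
chunks = chunk_passage(passage)

# Hypothetical IDs; the point is that the graph node and the summary share one.
triple = Triple(subject_id="character:ember", verb="is_a", object_id="species:dragon")
```

Keeping the parent passage on every small chunk means a precise match on a short sentence can still hand the model enough surrounding text to answer with.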

From there, you get access to the library. You can “ask about world” and a lightweight LLM gets access to the hybrid RAG store. A later build may make the ask more diegetic: if you put Discworld into the system, expect to hear “ook”. There’s no conversation history and nothing complex, just an LLM that can make a tool call to answer your questions. It’s mainly a debugging tool to make sure the world data is coherent and accurate, and that the nouns and verbs of the Z-Forge fiction ontology are useful.

That takes you to Experience Generation. This is where we get into multi-agent work using LangGraph, with escalation and arbitration processes.

flowchart TD

%% Subgraph: State & Artifacts

subgraph State [State / Artifacts]

direction LR

S1[(Z-World KV Store)]

S2[(Player Profile)]

S3[(Outline - WIP)]

S3a[(Research Notes - Ref)]

S4[(Polish Draft - WIP)]

S5[(Ink Script - WIP)]

S6[(Validation Report)]

end

%% Subgraph: Agents

subgraph Agents [Agent Roles]

A1[Outliner]

A2[Technical Editor]

A3[Story Editor]

A4[Staff Writer]

A4r[Researcher]

A5[Junior Scripter]

A6[Senior Scripter]

A7[QA Analyst]

A8[Final Technical Reviewer]

A9[Arbiter]

end

%% Subgraph: External Tools

subgraph Tools [External Tools & Services]

T1_qw{{query_entities}}

T1_rs{{retrieve_source}}

T2{{Ink Compiler}}

end

%% Workflow Logic

Start((Player Prompt)) --> Node_Outline

%% Step 1: Outlining (Shift-Left)

Node_Outline[outline_author]

A1 -.-> Node_Outline

S1 & S2 --> Node_Outline

S3a --> Node_Outline

Node_Outline -- "Research Needed" --> Node_Outline_Res[outline_researcher]

A4r -.-> Node_Outline_Res

T1_qw & T1_rs --- Node_Outline_Res

Node_Outline_Res --> S3a

Node_Outline_Res --> Node_Outline

Node_Outline -- "Outline Ready" --> S3

Node_Outline -- "Outline Ready" --> S3a

Node_Outline -- "Invalid JSON" --> Node_Debug

Node_Outline -- "JSON Repair Failed" --> Fail((Exit))

Node_Review_Outline{outline_reviewer}

A2 & A3 -.-> Node_Review_Outline

S3 & S1 & S3a --> Node_Review_Outline

Node_Review_Outline -- "Logic Error (Tech Only)" --> Node_Outline

Node_Review_Outline -- "Story Editor Rejected" --> Node_Arbiter_Outline{arbiter_outline}

A9 -.-> Node_Arbiter_Outline

Node_Arbiter_Outline -- "Overruled" --> Node_Prose

Node_Arbiter_Outline -- "Upheld / Tech Also Fails" --> Node_Outline

Node_Review_Outline -- "Approved" --> Node_Prose

%% Step 2: Prose Polishing

Node_Prose[prose_writer]

A4 -.-> Node_Prose

S3 & S3a --> Node_Prose

Node_Prose -- "Research Needed" --> Node_Prose_Res[prose_researcher]

A4r -.-> Node_Prose_Res

T1_qw & T1_rs --- Node_Prose_Res

Node_Prose_Res --> S3a

Node_Prose_Res --> Node_Prose

Node_Prose -- "Draft Ready" --> S4

Node_Review_Prose{prose_reviewer}

A2 & A3 -.-> Node_Review_Prose

S4 & S1 & S3a --> Node_Review_Prose

Node_Review_Prose -- "Tone/Lore/Logic Fix" --> Node_Prose

Node_Review_Prose -- "Story Editor Rejected" --> Node_Arbiter_Prose{arbiter_prose}

A9 -.-> Node_Arbiter_Prose

Node_Arbiter_Prose -- "Overruled" --> Node_Script

Node_Arbiter_Prose -- "Upheld / Tech Also Fails" --> Node_Prose

Node_Review_Prose -- "Approved" --> Node_Script

%% Step 3: Scripting & Technical Loops

Node_Script[ink_scripter]

A5 -.-> Node_Script

S4 --> Node_Script

Node_Script --> S5

Node_Compile{Node: Ink_Compile_Check}

S5 --> Node_Compile

T2 --- Node_Compile

Node_Compile -- "Syntax Errors" --> Node_Debug[ink_debugger]

A6 -.-> Node_Debug

Node_Debug -- "Retry (ink)" --> Node_Compile

Node_Debug -- "Retry (json)" --> Node_Outline

Node_Debug -- "Critical Fail" --> Fail((Exit))

%% Step 4: Functional QA & Final Review

Node_Compile -- "Success" --> Node_QA[ink_qa]

A7 -.-> Node_QA

S5 --> Node_QA

Node_QA -- "Pathing Error" --> Node_Script

Node_QA -- "Passed" --> Node_Final_Rev[ink_auditor]

A8 -.-> Node_Final_Rev

S5 --> Node_Final_Rev

Node_Final_Rev -- "Structural Error" --> Node_Script

Node_Final_Rev -- "Approved" --> End((Output .Ink))

%% Styling

style Node_Outline fill:#f9f,stroke:#333

style Node_Outline_Res fill:#f9f,stroke:#333,stroke-dasharray:5 5

style Node_Prose fill:#bbf,stroke:#333

style Node_Prose_Res fill:#bbf,stroke:#333,stroke-dasharray:5 5

style Node_Script fill:#dfd,stroke:#333

In summary, the system is modeled on the basics of real writing and development. An Outliner drafts the overall story, a Prose Writer turns it into the detailed scenario, Story and Technical Editors enforce internal logic and the rules of the fictional world along the way, and a team of scripters and debuggers makes sure the output will run in the Ink engine.
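The branching after the outline review can be sketched as two small routing functions. The node names (outline_author, arbiter_outline, prose_writer) come from the diagram; the state fields and function names are hypothetical, and this is an illustration of the routing logic rather than the project's implementation. A function of this shape is the kind of thing a LangGraph conditional edge would call to pick the next node.

```python
from typing import TypedDict


class ReviewState(TypedDict):
    tech_approved: bool   # Technical Editor verdict (hypothetical field name)
    story_approved: bool  # Story Editor verdict (hypothetical field name)


def route_outline_review(state: ReviewState) -> str:
    """Pick the next node after outline_reviewer."""
    if not state["story_approved"]:
        # "Story Editor Rejected": escalate instead of looping immediately.
        return "arbiter_outline"
    if not state["tech_approved"]:
        # "Logic Error (Tech Only)": send the outline back for another pass.
        return "outline_author"
    return "prose_writer"  # "Approved"


def route_outline_arbiter(state: ReviewState, objection_overruled: bool) -> str:
    """Pick the next node after arbiter_outline."""
    if objection_overruled and state["tech_approved"]:
        return "prose_writer"  # "Overruled"
    return "outline_author"    # "Upheld / Tech Also Fails"
```

Keeping the routing in plain functions makes each branch of the diagram easy to unit test without calling a model.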

Orchestrating LLMs can be tricky. You have to set clear guidelines and be prepared to settle disputes. For example, when I first added the story editor, I started getting long loops and failures when trying to make stories where my daughter meets her favorite dragons. Well, the story editor was doing its job: in these books, humans are “scavengers” and generally thought of as a particularly annoying type of snack, so it was right to reject the premise. But it wasn’t enough to tell the story editor to let the user’s premise override the world rules; evidently that got lost in the complexity of its task. So I had to add an escalation node where the context is limited to the story editor’s objection and the user’s premise; in this limited context, it was very easy to resolve that the objection was based on the premise, and the premise wins, even with a very lightweight model.
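A minimal sketch of that escalation step, assuming the arbiter sees only two strings and answers with a single word. The prompt wording, the function names, and the OVERRULE/UPHOLD reply convention are all assumptions for illustration; the model call is passed in as a plain callable so the sketch runs offline with a stand-in.

```python
from typing import Callable


def build_arbiter_prompt(user_premise: str, objection: str) -> str:
    """Build a prompt containing only the premise and the objection."""
    return (
        "A story editor objected to a player's requested premise.\n"
        f"Player premise: {user_premise}\n"
        f"Editor objection: {objection}\n"
        "If the objection exists only because the premise breaks a world rule, "
        "the premise wins. Reply with exactly OVERRULE or UPHOLD."
    )


def arbitrate(user_premise: str, objection: str, llm: Callable[[str], str]) -> bool:
    """Return True when the story editor's objection is overruled."""
    reply = llm(build_arbiter_prompt(user_premise, objection))
    return reply.strip().upper().startswith("OVERRULE")


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call (hypothetical); always overrules.
    return "OVERRULE"


overruled = arbitrate(
    user_premise="A human child befriends the dragons.",
    objection="Dragons in this world treat humans as prey.",
    llm=fake_llm,
)
```

The design choice is the narrow input: the outline, the world data, and the editor's other notes never reach the arbiter, so there is little for a lightweight model to get lost in.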

Speaking of – there’s a wide range of models at play in Z-Forge, and you can configure all of them. For example, the prose is generated by Sonnet by default, but gemini-flash-lite does the first round of debugging should there be a compilation error in an Ink script. A couple of the underdogs like Qwen and Llama are in there, too, and you can take your pick of providers from OpenAI, Gemini, Anthropic, and Groq, as long as you bring your own API keys. Different models do different tasks well, and vendors are shipping new ones faster than anyone can benchmark them. A smart system stays ready to adapt.
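One simple way to keep per-role models swappable is a defaults table with user overrides on top. The sketch below is an assumption about shape, not Z-Forge's real configuration: the role keys borrow node names from the diagram, the model strings are informal labels rather than real API model IDs, and the fallback choice is invented for the example.

```python
from typing import Optional

# Informal labels, not provider model IDs (assumption for illustration).
DEFAULT_MODELS = {
    "prose_writer": "sonnet",
    "ink_debugger": "gemini-flash-lite",
}

# Hypothetical fallback for roles with no explicit default.
FALLBACK_MODEL = "sonnet"


def resolve_model(role: str, overrides: Optional[dict[str, str]] = None) -> str:
    """User overrides win, then per-role defaults, then the fallback."""
    if overrides and role in overrides:
        return overrides[role]
    return DEFAULT_MODELS.get(role, FALLBACK_MODEL)


# Example: swap only the debugger to a different model.
debugger_model = resolve_model("ink_debugger", {"ink_debugger": "llama"})
```

Because every agent asks for its model by role, trying a newly released model on one task is a one-line change.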

How it’s Built

Not by me, as I hinted at before. It’s a collaboration between a silicon lead developer and a silicon outsource team using techniques modeled on Donald Knuth and Margaret Hamilton. I call the process Literate Agentic Programming. Phase 1 is collaboration to build specifications in the IDE with a silicon lead developer; phase 2 is agentic implementation from the spec at the CLI by a silicon outsource team.

flowchart TD

subgraph Personas

dev[Human Developer]

ide["Silicon lead (LLM in IDE)"]

cli["Silicon outsource team (LLM in CLI)"]

end

subgraph Repositories

subgraph git

instr[Agent instructions]

spec[Specification files in Markdown]

code[Source Code]

end

end

%% Process flow

start((Human initializes project)) -->

1[Human writes instructions, basic spec, README] -->

2[Human collaborates with LLM in IDE to refine specs] -->

3[Human asks silicon lead to hand off for implementation] -->

4[Silicon team implements app/feature/enhancement per prompt, spec] -->

5[Human runs app for testing] -- No bugs --> done(( ))

5 -- Bugs found -->

6[Human debugs with LLM assistance] -- Spec still good -->

7[Human and/or silicon lead debugs] --> done

6 -- Spec needs update -->

8[Human and silicon lead collaborate on spec update] -- Spec changes minimal -->

9[Silicon lead fixes directly] --> done

8 -- Significant spec changes --> 4

%% Flow parts

dev .-> 1

1 .-> spec

1 .-> instr

dev .-> 2

ide .-> 2

2 <.-> spec

4 .-> code

7 .-> code

%% instr --> ide

%% instr --> cli

%% dev .-> instr

%% dev .-> spec

%% spec <.-> ide

%% spec .-> cli

I am the carbon-based solo developer. GitHub Copilot (and in some later updates, the open-source “Continue”) is my silicon lead developer, working with me in the IDE. I am responsible for determining a problem statement or goal. I write some specs directly in Markdown format. I also discuss them in detail with the silicon lead dev, primarily using Sonnet. I provided some detailed agent instructions (visible in the GitHub repo) that essentially tell it: you’re no junior coder; focus on making good specifications so that software can be built, but don’t build it unless you’re asked for a minor change that’s still in spec, or a bug fix.

From there, the silicon lead dev provides a prompt that I paste into Copilot CLI (possibly the open-source “Aider” in a future update). The agent instructions tell the CLI tool that it is responsible for implementation and must work from the written specifications. This part is a more straightforward application of off-the-shelf tools, and it’s what the industry hadn’t provided last year when I tried to make the “FEW” spec-driven process work using my homebrew Python scripts.

How effective is the process? I already had a build in Flutter/Dart that used multi-agent orchestration via a homebrew state machine and a custom MCP server. When I wanted to pivot to Python to leverage the superior AI stack available there, the whole thing was rewritten in about an hour. Yes, it’s a small app, but I didn’t have to write a single line of code to change the language and UI framework and reduce the code footprint by leveraging applicable libraries. That’s a game-changer, and it speaks to how important good software engineering practices are.

Note that this covers the most basic solo development scenario. With specifications becoming essentially a form of code, and well-written test cases being essentially a form of specification, the process scales up well to include input from QA and BA resources. It also lends itself well to two phases of carbon-based developers, with a lead doing high-level specifications and individual contributors getting closer to the metal.

You know that old picture of Margaret Hamilton standing next to a print-out of the Apollo guidance system code that’s taller than her? Well, it’s not all computer code. Maybe 10% of it is. The rest of that is specifications, proofs, and documentation. The woman who coined the term “software engineering” showed us from the beginning that explaining and validating your process is vital to actually making useful code. Her methods got us to the moon on hardware that would be jealous of an Apple //C.

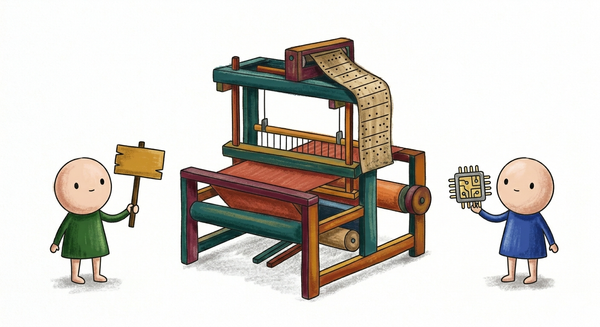

Donald Knuth formalized a closely related idea in a paradigm he called Literate Programming. The essential idea is that you write in detailed natural language your problem statement and your solution. You put a little bit of code into that documentation where the ambiguities of natural language just won’t suffice (we remember Turing and Church for a reason, after all). Then you only write code to, essentially, explain all this to the computer.

That last bit is where LLMs become so effective as software development partners. We still need to do our due diligence in explaining what we actually want to build in an unambiguous way. Agentic development tools can’t replace a whole team, and they can’t institute good engineering practices. But they can really shine in an environment where good engineering practices are followed.

I recommend the following KPIs for teams following the Literate Agentic Programming paradigm, in honor of two of the greats:

One Hamilton (Hm) is about a million lines of combined code, technical specifications, and other documentation used in designing code, as a single combined repository. That’s from a wild guess at the total lines in the famous picture.

The Knuth Index (KI) is a scale from 0 to 1 based on the ratio of natural language to code in the repository. It’s only valid if the code passes quality and acceptance testing – nobody cares about your spec if your product doesn’t work!
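Both KPIs are easy to compute once you have line counts. The sketch below rests on two assumptions that the definitions above leave open: the Knuth Index is taken as the natural-language share of all lines, which is one way to keep a ratio within 0 to 1, and an invalid index (failing tests or an empty repository) is reported as None. The function names and sample counts are made up.

```python
from typing import Optional

LINES_PER_HAMILTON = 1_000_000  # "about a million lines", per the definition


def hamiltons(prose_lines: int, code_lines: int) -> float:
    """Repository size in Hamiltons (Hm): combined prose and code lines."""
    return (prose_lines + code_lines) / LINES_PER_HAMILTON


def knuth_index(prose_lines: int, code_lines: int, tests_pass: bool) -> Optional[float]:
    """Natural-language share of the repository, or None when not valid.

    Assumption: KI = prose / (prose + code), so the result stays in [0, 1].
    """
    total = prose_lines + code_lines
    if not tests_pass or total == 0:
        return None
    return prose_lines / total


# Made-up counts for a small repository.
size_hm = hamiltons(prose_lines=9_000, code_lines=1_000)
ki = knuth_index(prose_lines=9_000, code_lines=1_000, tests_pass=True)
```

With these sample numbers the repository is a hundredth of a Hamilton, and nine of every ten lines are natural language.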

Welcome to the future, developers. The coding jobs are disappearing. The engineering jobs are going to explode. Are you ready?